The controversial facial recognition software company, Clearview AI, has settled with the ACLU in an Illinois state court. Will this recent step be enough, or must additional steps be taken to ensure privacy rights in the US and abroad?

Lauren Kook

23 June 2022


In early May 2022, Clearview AI settled with the American Civil Liberties Union in an Illinois state court, agreeing to restrict the sale of its facial recognition software and photo database largely to law enforcement agencies. This follows an Italian government decision in March 2022 that ordered the company to pay 20 million euros in fines for the illegal use of the facial recognition software, and to erase all such data gathered within Italy.

The controversial company first faced criticism in January 2020 when the New York Times broke the story about Clearview’s ground-breaking facial recognition app. Since then, there have been a number of lawsuits against the company and censure by politicians and civil rights groups across the globe. Questions of data privacy violations and the legality of “photo scraping”, the method Clearview uses to build its massive database, have taken center stage in the debate as the true reach of Clearview AI is investigated.

Clearview AI claims its use of the photo scraping method is completely legal. The photos in its massive database, which the company boasts contains more than 20 billion images, are taken only from public sources such as public social media profiles. The founder of the company, Hoan Ton-That, claims that many companies scrape photos and that major firms such as Facebook are aware of this. However, in the aftermath of the New York Times article, many of the major tech companies objected to Clearview’s scraping activities, some going so far as to send cease and desist letters stating it is a violation of their terms of service. One such company was Twitter, which stated that scraping was a clear violation of its privacy policy.

Beyond the huge database it has compiled, Clearview’s claim to fame is its software, which it states is more sensitive and efficient than others. One downside, or some may say upside, of most facial recognition software is that it is limited in the angles from which it can analyze a photo. Photos or video taken from too high a position, such as from most security cameras, are useless. Clearview’s algorithm has bypassed this hurdle, which has been one of its most important selling points.

The use of facial recognition software has been controversial for some time. Clearview’s most prominent justification for the software is how it can help and has helped law enforcement agencies solve the most egregious crimes. The company claims law enforcement agencies make up the majority of users, although this claim has been disputed by several agencies. Many agencies Clearview claims are its users have stated that they only use the free trial version provided by Clearview, and did not purchase the software for widespread use. Despite this, there are many confirmed users, including the FBI, ICE (Immigration and Customs Enforcement), and metropolitan police departments.

But this in itself is controversial. One of the most prominent concerns regarding this software is how it might be used to identify politically vulnerable individuals, such as protesters and political activists. Instances of facial recognition software being used in this way have already occurred, most notably during the Hong Kong demonstrations. Additionally, studies have shown facial recognition software is less accurate when identifying individuals with darker skin tones. During the Black Lives Matter movement, the use of facial recognition software was criticized as leading to disproportionate false arrests of people of color. This concern is intensified by the fact that all data concerning the accuracy of the software have been released by Clearview AI, not by law enforcement agencies or independent entities.

Clearview has not limited the sale of its software to United States agencies, either. The software has also been used in several European countries including Italy, which ultimately led to the 20 million euro fine against Clearview. Other European countries are assessing the legality of Clearview’s scraping method and use of facial recognition in the context of their own national privacy laws, while the European Union debates whether it violates its General Data Protection Regulation (GDPR). The legality of the software is not immediately clear, which some have pointed out highlights the need for stronger privacy and data protection laws on both the national and EU levels. Beyond Europe, Clearview has allegedly also sold its software to both Saudi Arabia and the United Arab Emirates, despite claims by Clearview’s founder that the company would not sell to governments with records of human rights violations.

In March 2022, Ukraine began using the software in the context of the ongoing conflict with Russia on Ukrainian territory. This comes after Clearview approached the Ukrainian government, offering free access not only to its powerful facial recognition software but also to its photo database, which includes 2 billion images acquired from Russian social media. One of the main uses of the software is to identify casualties. However, the potential uses are many, from identifying perpetrators of war crimes to aiding the reunification of Ukrainian refugees. Clearview AI has not offered the software to the Russian government.

This is only one instance of tech companies offering aid to the Ukrainian war effort. Also in March, Elon Musk’s SpaceX deployed its Starlink satellites over Ukraine, ensuring internet access to the government and helping deter cyber-attacks. While many will applaud the assistance to Ukraine and shudder to think what would happen if Clearview’s software were in the hands of the Kremlin, it sets a dangerous precedent. The question is whether decisions that undoubtedly sway global events should be in the hands of private companies, especially those such as Clearview that have a record of selling software to governments with questionable human rights track records.

While the recent decisions in Illinois and Italy seem to set a direction for the future of Clearview AI and for the use of facial recognition software in general, what is most apparent is that all of this is taking place in uncharted waters. As technological innovation continues to outpace privacy and data protection laws, the need for such laws increases exponentially.

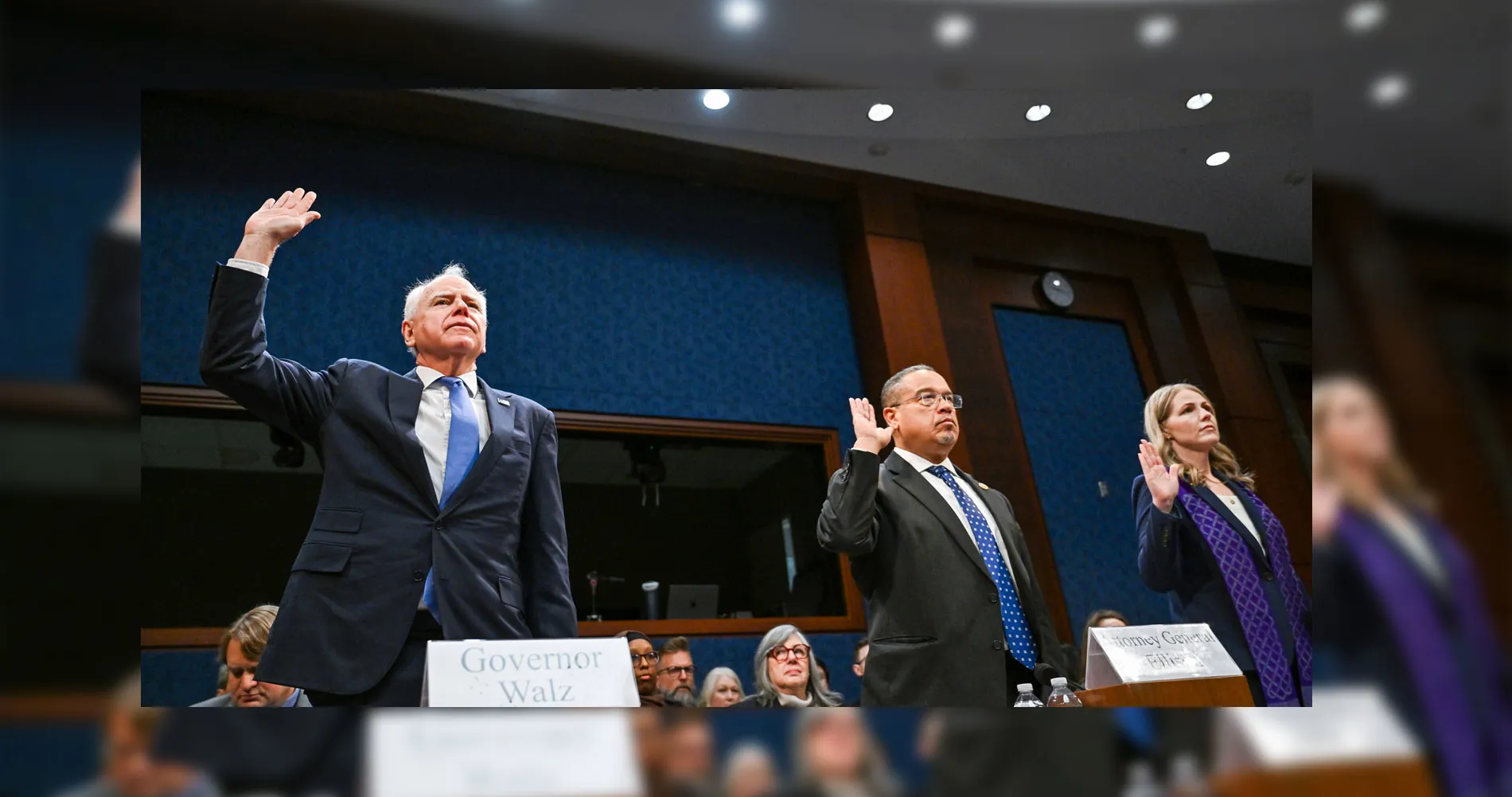

Picture: 29 March 2019, Los Angeles, California, USA, Gate agents assist passengers using facial recognition camera technology to board an American Airlines Group Inc. flight to China at Los Angeles International Airport. © IMAGO / ZUMA Wire
