A growing number of Artificial Intelligence (AI) companies now face US government scrutiny and lawsuits over the deaths of several teenagers. With each new update of their software, vulnerable and often young individuals are finding ways of using AI to share their inner turmoil, in conversations that have led to self-harm and suicide. Government and concerned parents are anxious to curb the reckless release of these sophisticated machines. Whether President Trump’s recent AI Framework EO will provide the urgently required regulation remains to be seen.

Eimhin McGann

15 January 2026


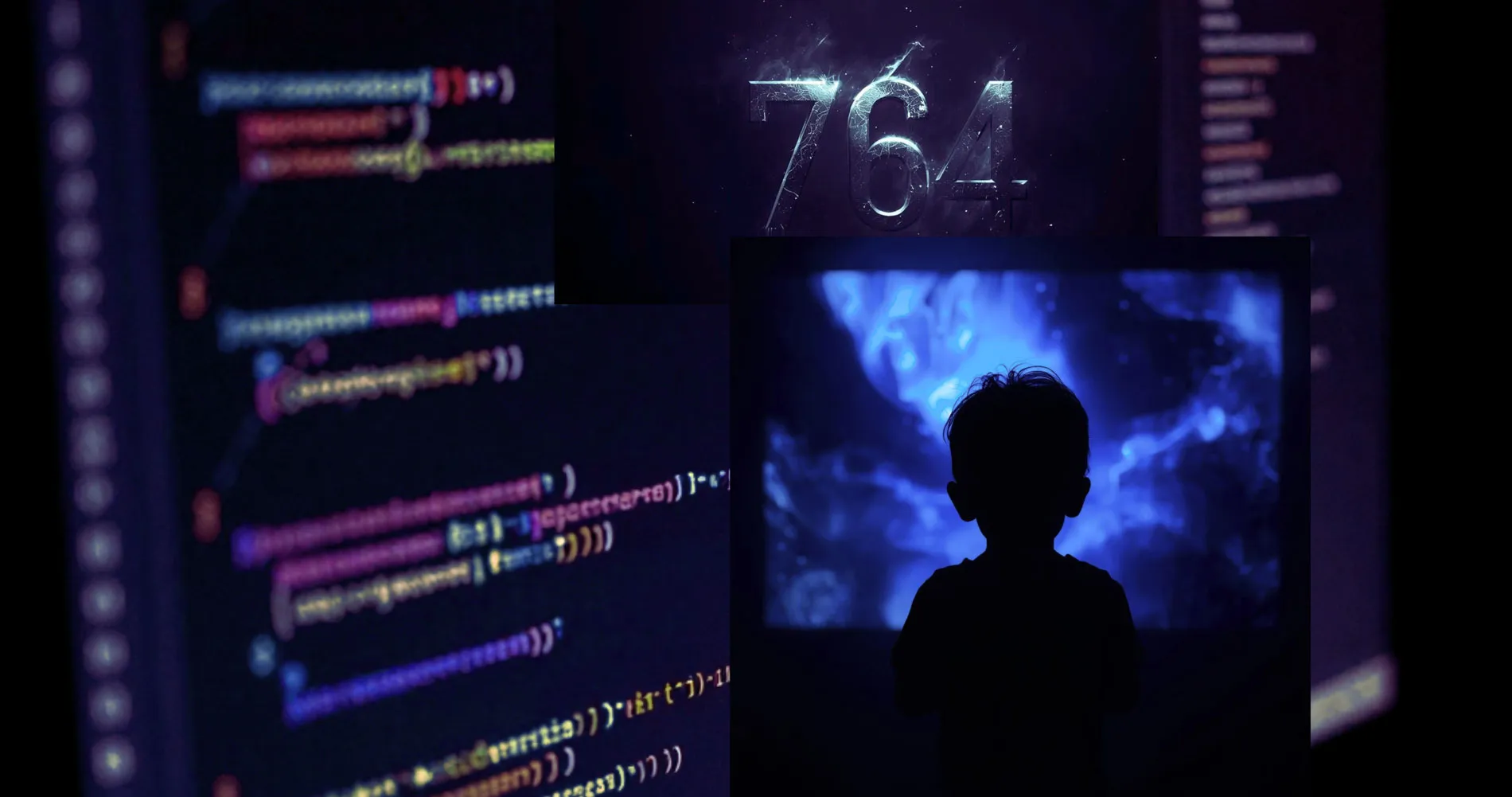

Founded in California in 2021 by former Google engineers Noam Shazeer and Daniel De Freitas, Character.AI is an artificial intelligence platform, largely marketed towards young people, that allows users to have conversations with chatbots that take the form of famous media characters such as ‘Harry Potter’.

Despite the seemingly innocent concept, Character.AI has become a lightning rod for the debate around the use of artificial intelligence, online safety and mental health, especially of young people. Parents and their children struggle to make sense of a very powerful, unregulated new technology.

Megan Garcia, mother of 14-year-old Sewell Setzer III, who died by suicide on 28 February 2024, is suing Character.AI, accusing the company of negligence and wrongful death as well as intentional infliction of emotional distress. Garcia’s son began using the Character.AI platform to converse with fictional characters in April 2023 and quickly became reclusive and obsessed with these artificial relationships. In the weeks before his suicide, Sewell was conversing with a chatbot that resembled the ‘Game of Thrones’ character Daenerys Targaryen, and which used what the lawsuit claims was highly sexual and emotionally manipulative language. Garcia claims that Sewell had mentioned suicidal ideation to the chatbot, which had not responded in an appropriate way. Garcia told CNN that “There were no suicide pop-up boxes that said, ‘If you need help, please call the suicide crisis hotline’. None of that”.

Sewell’s tragedy is not unique and Character.AI is not the only company under scrutiny due to safety concerns. The family of 16-year-old Adam Raine have filed a wrongful death lawsuit against OpenAI, developer of the platform ChatGPT, arguing that the company placed a priority on maintaining Adam’s engagement with the platform rather than his safety. His family claim that when OpenAI made changes to ChatGPT to “create a supportive environment”, where the user can feel heard when discussing topics like mental health problems, the result was that the platform engaged with Raine’s obsessive conversations about suicide, which culminated in him taking his own life in April 2025.

In response to these tragic events, Character.AI has begun trying to improve its safety measures, such as increasing the mental health awareness of its chatbots and implementing age restrictions.

A spokesperson for Character.AI said, “We invest tremendous resources in our safety program, and have released and continue to evolve safety features, including self-harm resources and features focused on the safety of our minor users.”

An entirely separate model has been introduced for young people under the age of 18, which focuses on creative projects like livestreaming. Within this Under 18 version, which launched on 25 November 2025, minors are prevented from engaging in direct conversations with Character.AI chatbots. The spokesperson added that the company is working with external organizations, such as ConnectSafely, a US nonprofit that educates people of all ages about online safety, privacy, security and digital wellness, to review new features as they are released.

OpenAI also appears to be attempting to make amends, with CEO Sam Altman announcing that they are developing an “age-prediction system to estimate age based on how people use ChatGPT.” If the AI believes the user to be a minor, sexually explicit conversations and topics like suicide are declined.

The US Federal Trade Commission has also launched a teen-safety investigation into Google, Meta, Instagram, Snapchat and xAI, along with Character.AI and OpenAI, based on reports of suicides and self-harm among young people caused or exacerbated by AI platforms.

These are steps in the right direction, but for the families affected it is too little, too late. Matthew Bergman, Megan Garcia’s attorney and lead attorney at the Social Media Victims Law Center, has criticized these companies for intentionally releasing their products without sufficient safety features, especially for younger users. “I thought after years of seeing the incredible impact that social media is having on the mental health of young people, I thought that I wouldn’t be shocked,” he said. “But I still am at the way in which this product caused just a complete divorce from the reality of this young kid and the way they knowingly released it on the market before it was safe.”

While Character.AI has offered an apology and begun to implement reforms, in court Bergman asked, “What took you so long, and why did we have to file a lawsuit, and why did Sewell have to die in order for you to do really the bare minimum?”

The case continues, and Bergman hopes that Garcia’s lawsuit will create a financial incentive for Character.AI, and indeed all other AI companies, to develop more robust guidelines for their AI systems.

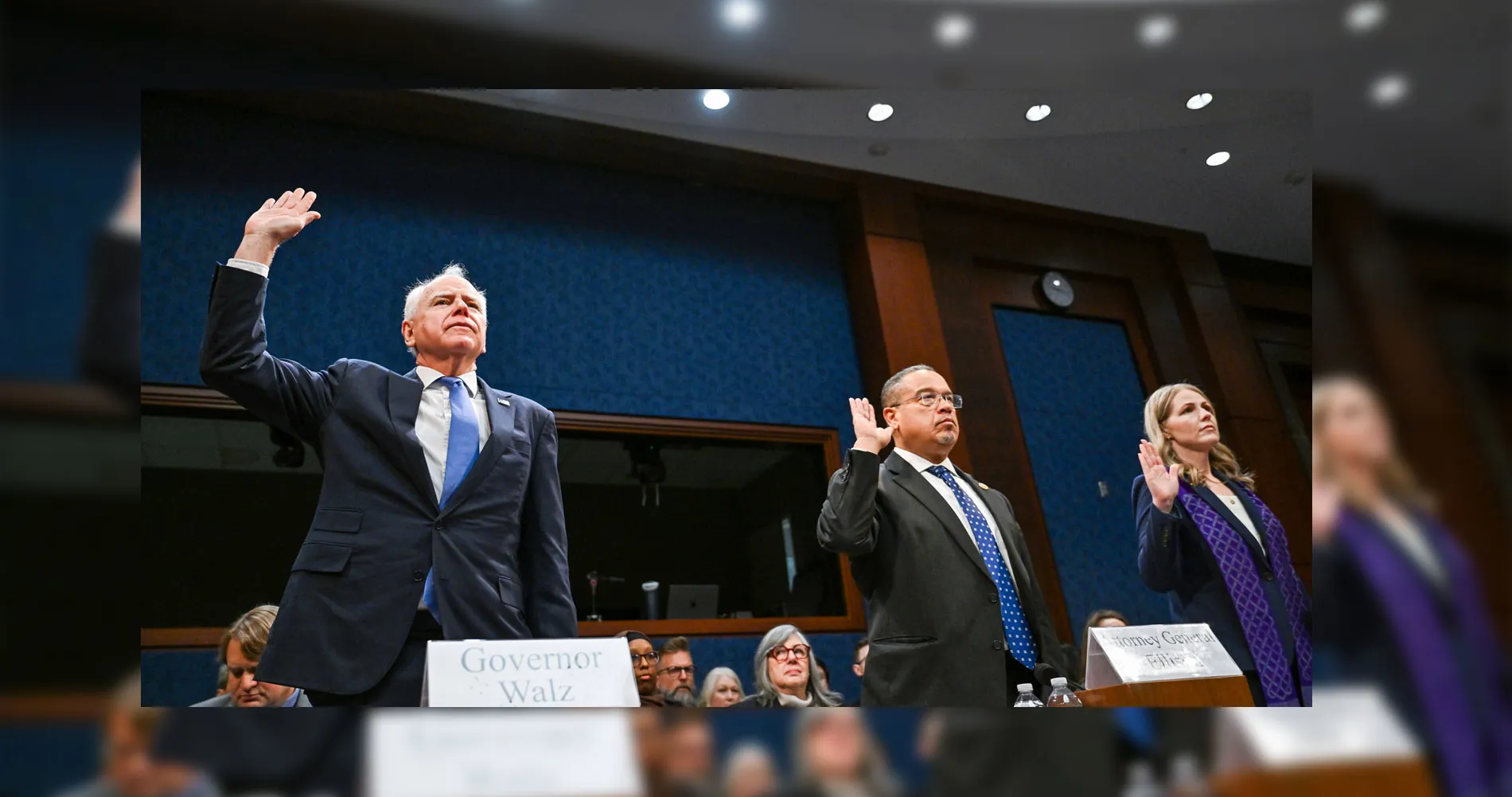

On 11 December 2025, President Trump signed an Executive Order (EO) titled “Ensuring a National Policy Framework for Artificial Intelligence.” The EO declares that America should have a “minimally burdensome” national AI standard rather than 50 different state regimes. It directs his administration to treat aggressive state rules as a threat to innovation, economic growth, and even national security.

Although this move might seem AI-friendly, it does eliminate the race to the bottom in which each state legislates lax AI regulation in order to attract AI companies. Whether Trump’s federal AI Framework will provide sufficient regulatory protection for young and impressionable users remains to be seen. Yet as long as the dangers of AI remain unregulated, the most vulnerable will pay the ultimate price.